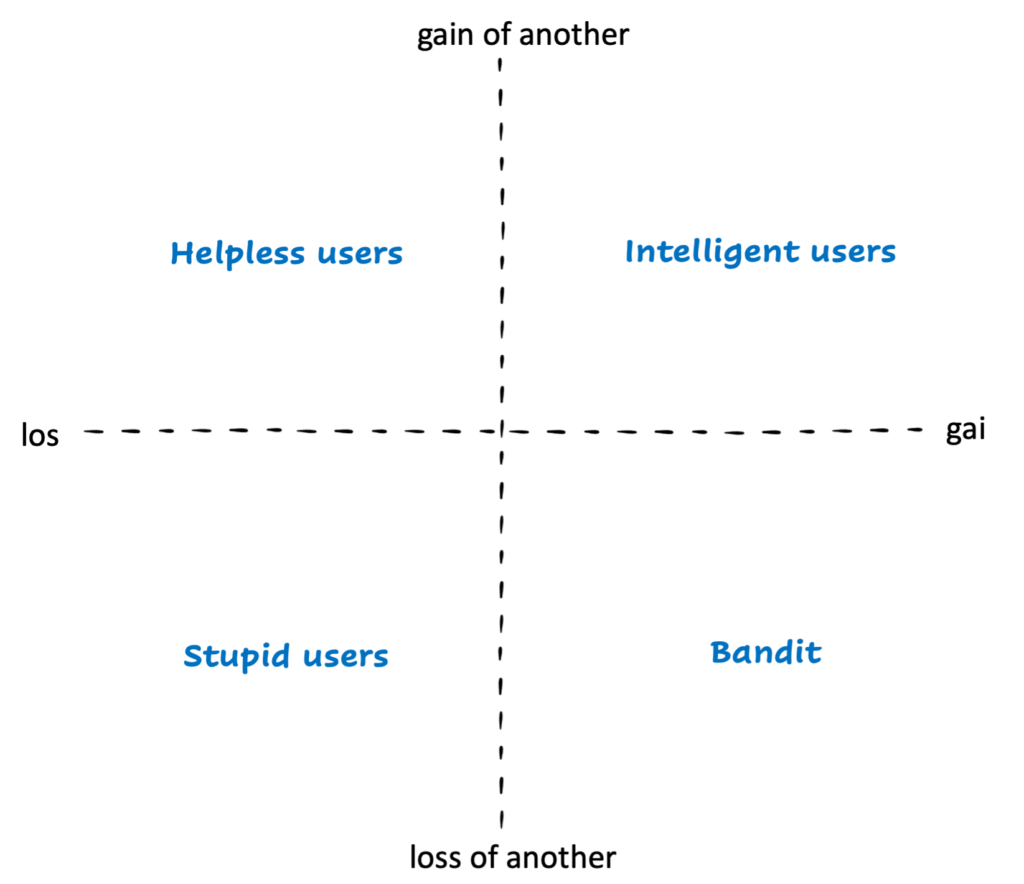

Ever watched a user navigate your meticulously designed interface and wondered, “Why on earth would they do that?” If you have ever felt the frustration of a user skipping a critical onboarding step or clicking the wrong button despite a clear label, Carlo M. Cipolla’s The Basic Laws of Human Stupidity is the diagnostic manual you didn’t know you needed.

At its heart, Cipolla provides a behavioral framework that helps us understand why irrational, counterproductive behavior not only exists but persists, even in intelligent systems and well-intentioned groups. This framework categorizes human actions into a 2×2 matrix based on their impact: whether an action creates a gain or loss for the individual, and whether it creates a gain or loss for others. By defining “stupidity” as a specific quadrant – where an individual causes losses to others while gaining nothing or even losing something themselves – Cipolla illustrates that irrationality is a universal constant. For product builders, this means accepting that the Human Operating System is inherently imperfect and that “smart” users are not immune to these systemic glitches.

Cipolla’s Matrix of Human Behavior

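Cipolla visualizes human behavior in a simple 2×2 matrix, based on how actions affect the individual and others: intelligent people create gains for themselves and for others, bandits gain at others’ expense, helpless people lose while others gain, and stupid people cause losses to others while gaining nothing or even losing something themselves.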

This framework maps neatly onto user archetypes or stakeholder behavior:

- Intelligent users → contribute positively to the system (e.g., creators, power users).

- Bandits → exploit it (e.g., spammers, fraudsters).

- Helpless users → depend on your system but get hurt by it (e.g., victims of poor UX).

- Stupid users → harm the ecosystem without realizing it (e.g., spread misinformation, trigger unintended consequences).
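The four quadrants can be expressed as a small classifier. This is a minimal sketch, not something from Cipolla's text: the names `Quadrant` and `classify` are hypothetical, the payoffs are assumed to be single numbers, and the handling of actions that land exactly on an axis (a payoff of zero) is an arbitrary convention chosen for this example.

```python
from enum import Enum


class Quadrant(Enum):
    INTELLIGENT = "intelligent"
    BANDIT = "bandit"
    HELPLESS = "helpless"
    STUPID = "stupid"


def classify(gain_to_self: float, gain_to_others: float) -> Quadrant:
    """Place one action in the 2x2 matrix from its two payoffs.

    Assumption of this sketch: "loss to others" means a strictly
    negative payoff, and "gain to self" means a strictly positive one.
    """
    if gain_to_others < 0:
        # Others are harmed: a bandit profits, a stupid actor gains
        # nothing or even loses.
        return Quadrant.BANDIT if gain_to_self > 0 else Quadrant.STUPID
    # Others are not harmed: an intelligent actor also profits,
    # a helpless actor does not.
    return Quadrant.INTELLIGENT if gain_to_self > 0 else Quadrant.HELPLESS
```

For example, `classify(0, -3)` lands in the stupid quadrant because the actor gains nothing while others lose, whereas `classify(2, -3)` is a bandit.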

Let’s unpack Cipolla’s five laws and translate them into insights for modern-day technologists, UX designers, and PMs. Understanding these laws moves us beyond critique to actionable design: a toolkit for working with the human OS, not against it.

Law 1: Everyone Underestimates the Number of Stupid People.

Cipolla’s point: No matter how many times you’re proven wrong, you’ll always underestimate how many people act irrationally, self-destructively, or without logic, and how frequently they do so. This isn’t about education or intellect; it’s a pervasive human trait.

The “Why” (Cognitive Bias): This underestimation often stems from confirmation bias – we subconsciously seek out and remember evidence that confirms our belief that our users are “smart” and will use the product as designed. The availability heuristic also plays a role, as we tend to recall successful, rational interactions more easily than the frustrating, irrational ones. We default to assuming a shared rationality that often isn’t present.

Relevance for tech & product teams: Every system you build will encounter unpredictable user behavior.

i) Users won’t read the instructions.

ii) They’ll click the wrong button, ignore the obvious path, or use your feature in ways you never imagined.

iii) Even worse – they’ll blame you for it.

Design Takeaway: Design and product decisions must assume that irrational behavior is not an edge case; it’s the default case. Good UX doesn’t fight stupidity; it anticipates and absorbs it gracefully.

Law 2: The Probability of Stupidity is Independent of Any Other Characteristic.

Cipolla’s point: Stupidity is a universal constant; it has nothing to do with education, income, profession, or status. Smart people can make stupid choices, too.

The “Why” (Human Limitations): Even the brightest individuals operate within inherent human limitations. High cognitive load (too much information to process), attention deficits (distractions, multitasking), intense emotional states (frustration, excitement), or simple ego can all lead to seemingly irrational behavior. It’s not about lacking the capacity for intelligence, but about the variable conditions under which that intelligence is applied.

Relevance for tech & product teams: Don’t over-index on user sophistication. Even the most tech-savvy audience can behave unpredictably when attention, emotion, or ego get in the way. Think of:

i) Engineers forgetting to commit their code.

ii) Designers overwriting live files.

iii) Executives forwarding phishing emails.

Design Takeaway: Recognize that cognitive bias and irrational behavior are fundamental human design constraints. They are features of the human OS, not bugs in your users. Design systems that are forgiving and robust enough to support users across the full spectrum of their cognitive and emotional states, regardless of their perceived intelligence.

Law 3: A Stupid Person Causes Losses to Others While Gaining Nothing (or Even Losing Something).

Cipolla’s point: This is the definition of stupidity: behavior that causes losses to others while the actor gains nothing, or even loses, with no rational benefit to anyone. It’s behavior that creates a net negative sum for the system.

The “Why” (Behavioral Economics): This often stems from a lack of foresight, an inability to accurately assess long-term consequences, or a fundamental misunderstanding of complex system dynamics. Myopia regarding future outcomes or a simple inability to model the downstream effects of an action can lead to these seemingly irrational outcomes.

Relevance for tech & product teams: You’ll see this every day:

i) Users ignoring privacy warnings.

ii) Teams over-engineering a feature no one asked for.

iii) Companies launching products that damage trust or user experience.

In UX terms, it’s anti-value creation. In product terms, it’s negative-sum behavior.

Design Takeaway: Design systems that minimize the net damage caused by poor judgment. Use constraints, confirmations, and feedback loops that protect users, and your product, from self-sabotage.
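One way to picture constraints, confirmations, and feedback working together is a destructive action that is hard to trigger by accident and easy to reverse. The sketch below is illustrative only: `Workspace`, `delete`, and `undo_delete` are hypothetical names, and retyping the project name is just one assumed form of confirmation.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """Hypothetical container of named projects with a recoverable trash."""

    projects: dict[str, str] = field(default_factory=dict)
    trash: dict[str, str] = field(default_factory=dict)

    def delete(self, name: str, typed_confirmation: str) -> bool:
        # Constraint: an unknown name is rejected instead of raising.
        if name not in self.projects:
            return False
        # Confirmation: the user must retype the exact project name.
        if typed_confirmation != name:
            return False
        # Soft delete: move to trash so the action stays reversible.
        self.trash[name] = self.projects.pop(name)
        return True

    def undo_delete(self, name: str) -> bool:
        # Feedback: the return value tells the caller whether anything
        # was actually restored.
        if name not in self.trash:
            return False
        self.projects[name] = self.trash.pop(name)
        return True
```

The design choice here is that a careless click costs nothing: a wrong confirmation leaves the data untouched, and even a correct one can be undone.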

Law 4: Non-Stupid People Underestimate the Destructive Power of Stupid People.

Cipolla’s point: We assume stupidity is harmless. It’s not. Stupid people cause outsized damage precisely because their actions defy prediction and reason.

The “Why” (Risk Perception): Our brains are ill-equipped to deal with non-linear risks and “black swan” events, especially when they stem from seemingly innocuous, irrational human actions. We expect logical failures or malicious attacks, not accidental, widespread damage from well-meaning but misguided actions.

Relevance for tech & product teams: A single, seemingly minor design flaw or an unforeseen user loophole can cascade into major cybersecurity breaches, amplify misinformation, or even cause system-wide collapse. User error, particularly when amplified by scale, can have consequences far out of proportion to the original mistake.

Design Takeaway: Always account for the chaos factor in design and technology. The impact of user error does not grow linearly; at scale it can compound. Stress test for human unpredictability as you would for system load. What happens if a user consistently misinterprets a core function? How does the system degrade under sustained, unintentional misuse?
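A stress test for unpredictable users can be as simple as replaying random, unscripted action sequences and checking that a few invariants never break. The following is a rough sketch under stated assumptions: the `Cart` class, the action names, and the "never charge twice" invariant are all invented for illustration and stand in for whatever system is actually under test.

```python
import random

# Hypothetical user actions, including ones a "rational" user would not take.
ACTIONS = ["add_item", "remove_item", "checkout", "back", "double_click_pay"]


class Cart:
    """Hypothetical system under test: a cart that must never charge twice."""

    def __init__(self) -> None:
        self.items = 0
        self.charges = 0
        self.paid = False

    def handle(self, action: str) -> None:
        if action == "add_item" and not self.paid:
            self.items += 1
        elif action == "remove_item" and not self.paid and self.items > 0:
            self.items -= 1
        elif action in ("checkout", "double_click_pay"):
            # Idempotent payment: repeated or premature clicks are absorbed.
            if self.items > 0 and not self.paid:
                self.paid = True
                self.charges += 1
        # Anything else (such as "back") is ignored rather than raising.


def run_misuse_session(seed: int, steps: int = 200) -> Cart:
    """Replay one random sequence of actions and check invariants each step."""
    rng = random.Random(seed)  # seeded so a failing session can be replayed
    cart = Cart()
    for _ in range(steps):
        cart.handle(rng.choice(ACTIONS))
        # Invariants that must hold no matter what the user does.
        assert cart.items >= 0
        assert cart.charges <= 1
    return cart
```

Running `run_misuse_session` across many seeds plays the role of a load test for behavior rather than traffic; any seed that trips an assertion is a reproducible misuse scenario.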

Law 5: A Stupid Person is the Most Dangerous Kind of Person.

Cipolla’s point: Unlike “bandits” (who gain at others’ expense) or “helpless people” (who lose for others’ gain), stupid people create losses for everyone, including themselves. They act without motive, pattern, or awareness. This absence of rationale makes them uniquely difficult to counter.

The “Why” (Systemic Impact): The danger lies in unintended consequences at scale. Lacking malicious intent, their actions are harder to detect, anticipate, or defend against. This isn’t about malice, but about indifference or simple lack of understanding amplified by powerful tools.

Relevance for tech & product teams: In technology, stupid systems, or misaligned incentives, can replicate this same pattern at scale.

i) When a poorly designed algorithm amplifies misinformation, it’s “stupidity at scale.”

ii) When a UX flow nudges users toward self-harm or addiction, it’s the same principle – not evil, just thoughtless.

Design Takeaway: Technology acts as an amplifier, intensifying either positive or negative human tendencies. When building, it is imperative to embed ethical guardrails and accountability mechanisms. This is about preventing harm born from indifference or oversight, rather than malicious intent, ensuring technology truly serves humanity.

Conclusion: Smarter Systems for Imperfect Humans

Cipolla’s basic laws of human stupidity, though humorous, serve as a profound and enduring reminder: human irrationality is not a flaw to be engineered away, but a fundamental, unchanging aspect of our nature – an immutable characteristic of the human OS. For anyone building products, true design maturity isn’t about striving for a perfect, rational user. It’s about building systems that not only tolerate but gracefully accommodate human unpredictability, cognitive biases, and the occasional, inevitable “stupid” action. It’s about creating technology that understands the full spectrum of human behavior, from the brilliant to the baffling.

Embrace human imperfection. The smarter our systems become, the more profoundly they must understand (and design for) human “stupidity.” It’s not about fixing humans; it’s about building a world, and products, that work with them, fostering a more resilient, intuitive, and ultimately, more human experience. Good systems reward intelligence, limit banditry, protect the helpless, and contain stupidity.

References:

“The Basic Laws of Human Stupidity” by Carlo M. Cipolla
